The Job Life Cycle on a Cluster

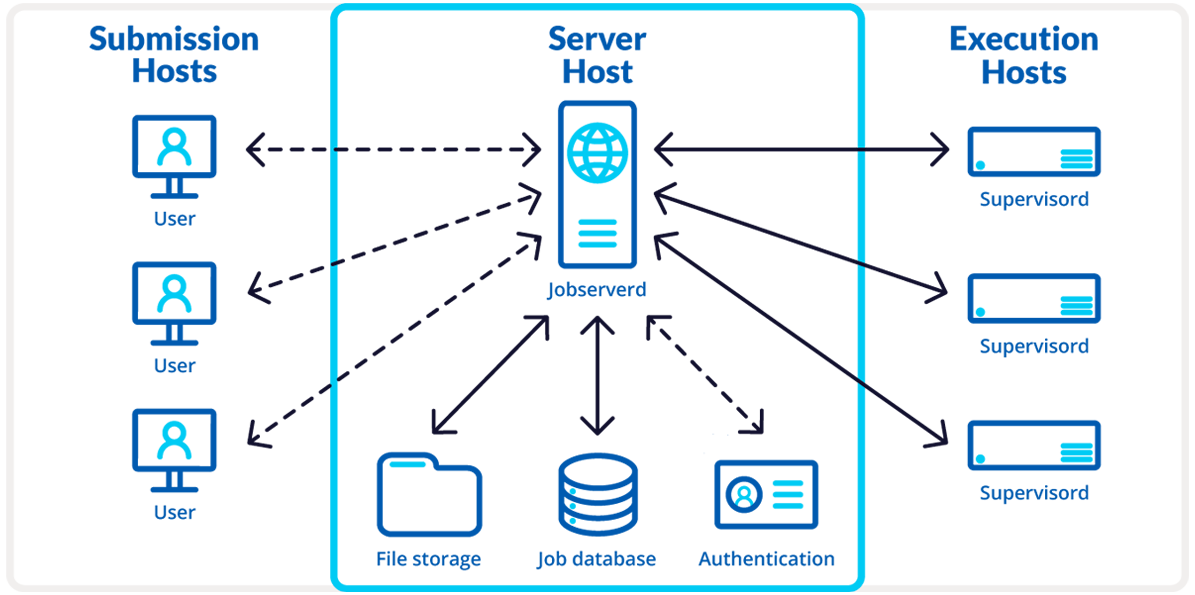

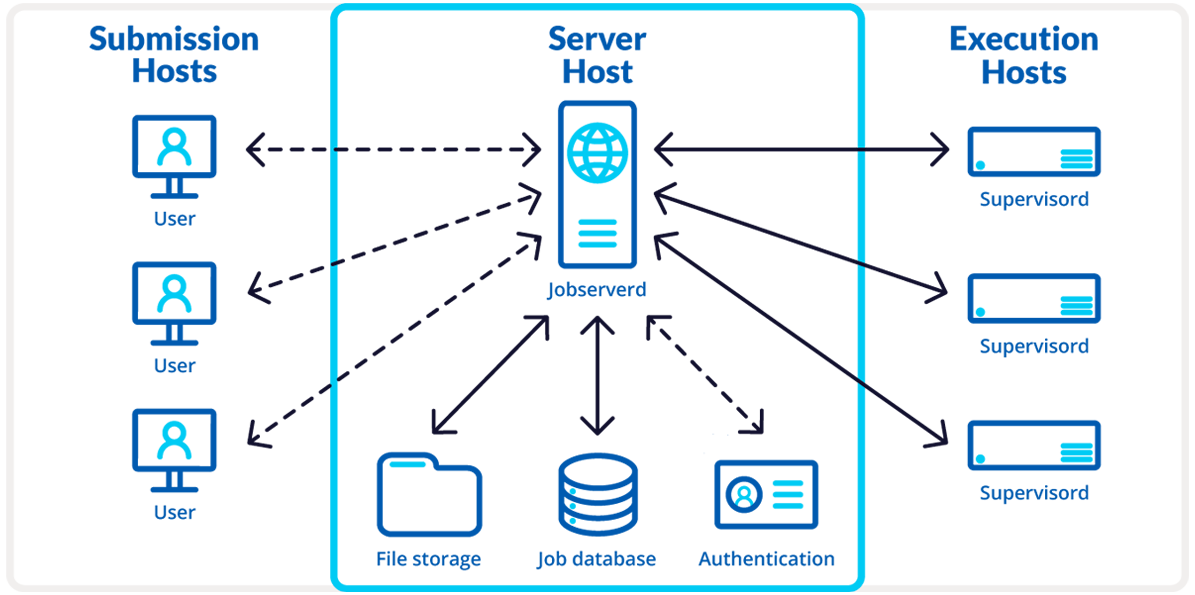

The Job Server infrastructure lets you, as a user of the Schrödinger Suite, submit scientific computations (jobs) to remote resources, and it manages the running of those jobs. Jobs can be submitted to a cluster that has a queuing system. In this case, the Submission Host is the local computer (physical or virtual) from which you submit the job, and the Server Host and Execution Hosts are machines on the cluster. A Job Server on the cluster manages the process. Before you can submit jobs to a cluster through a Job Server, you must register with that Job Server from your local computer, using your credentials.

Understanding how Schrödinger Suite jobs are run provides useful context for working with Job Server.

The "life cycle" of a job can be summarized as follows:

- A job is submitted from a Submission Host, the computer where your local Schrödinger Suite software is installed. Jobs can be submitted from both Maestro and the command line.

- The Submission Host sends the job to the Server Host. The Server Host is where the Job Server process (jobserverd) runs; it manages job submission from both Maestro and the command line, as well as the transfer of a job's input and output files. The job enters “Waiting” status.

- Job Server on the Server Host submits the job to the queuing system (e.g., Slurm).

- The queuing system dispatches the batch job to an Execution Host.

- A scratch directory for the job is created on the Execution Host.

- Input files are transferred from Job Server to the Execution Host scratch directory.

- The job runs in the scratch directory on the Execution Host and enters “Running” status.

- For many distributed Schrödinger applications, this initial job is a “driver” that can submit subjobs back to Job Server. That is, the Execution Host can also act as a Submission Host.

- While the job is running, its log file and, optionally, other streaming files are uploaded periodically from the Execution Host to Job Server on the Server Host.

- After the job has completed (successfully or not), output files are uploaded from the Execution Host to Job Server on the Server Host.

- The job scratch directory on the Execution Host is removed, and the job enters a final “Completed” status, or one of “Failed”, “Stopped”, or “Canceled” if the job did not finish normally.

- The job output files are downloaded from Job Server to the Submission Host when requested, or automatically for subjobs launched by a parent job.

- Job files are deleted from the Job Server file store once the output files have been downloaded.

- Completed jobs are removed from the Job Server database after 14 days, by default.

- The removal of undownloaded files from the file store and of job information from the database is controlled by several cleanup parameters in the Job Server configuration (see <link_to_advanced_js_options>). These apply only to the top-level job of a job hierarchy; subjobs, sub-subjobs, etc. are cleaned up when the top-level job is cleaned up.
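
The status changes and the default retention period in the life cycle above can be pictured as a small state machine. The following Python sketch is purely illustrative and is not part of the Schrödinger or Job Server API: the names `JobStatus`, `ALLOWED`, `advance`, and `is_due_for_removal` are hypothetical. The transitions out of “Waiting” other than to “Running”, and the assumption that the 14 days are counted from the time the job finished, are assumptions made for the example.

```python
from datetime import datetime, timedelta
from enum import Enum


class JobStatus(Enum):
    """Statuses named in the life cycle description."""
    WAITING = "Waiting"
    RUNNING = "Running"
    COMPLETED = "Completed"
    FAILED = "Failed"
    STOPPED = "Stopped"
    CANCELED = "Canceled"


# Final statuses: a job in one of these does not change status again.
FINAL_STATUSES = frozenset({
    JobStatus.COMPLETED,
    JobStatus.FAILED,
    JobStatus.STOPPED,
    JobStatus.CANCELED,
})

# Assumed transition table. Waiting -> Running -> final comes from the text;
# allowing a waiting job to fail or be canceled before it runs is an assumption.
ALLOWED = {
    JobStatus.WAITING: frozenset({
        JobStatus.RUNNING, JobStatus.FAILED, JobStatus.CANCELED,
    }),
    JobStatus.RUNNING: FINAL_STATUSES,
}

# Default retention of finished jobs in the database, per the text.
DEFAULT_RETENTION = timedelta(days=14)


def advance(current: JobStatus, new: JobStatus) -> JobStatus:
    """Return the new status, or raise ValueError if the change is not allowed."""
    if new not in ALLOWED.get(current, frozenset()):
        raise ValueError(f"illegal transition: {current.value} -> {new.value}")
    return new


def is_due_for_removal(finished_at: datetime, now: datetime,
                       retention: timedelta = DEFAULT_RETENTION) -> bool:
    """True once the retention period has elapsed since the job finished
    (assumes the period is counted from the finish time)."""
    return now - finished_at >= retention


if __name__ == "__main__":
    status = JobStatus.WAITING
    status = advance(status, JobStatus.RUNNING)
    status = advance(status, JobStatus.COMPLETED)
    finished = datetime(2024, 1, 1)
    print(status.value, is_due_for_removal(finished, finished + timedelta(days=15)))
```

In an actual deployment the retention period and related cleanup behavior are set by the Job Server configuration parameters mentioned above, not by client-side code like this.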
